// POST /der/model/predict/{model_id}: send OCR output (rec = recognized text, det = detection boxes); the model ID goes in the path

POST /der/model/predict/{model_id}

Content-Type: application/json

{

"rules": {},

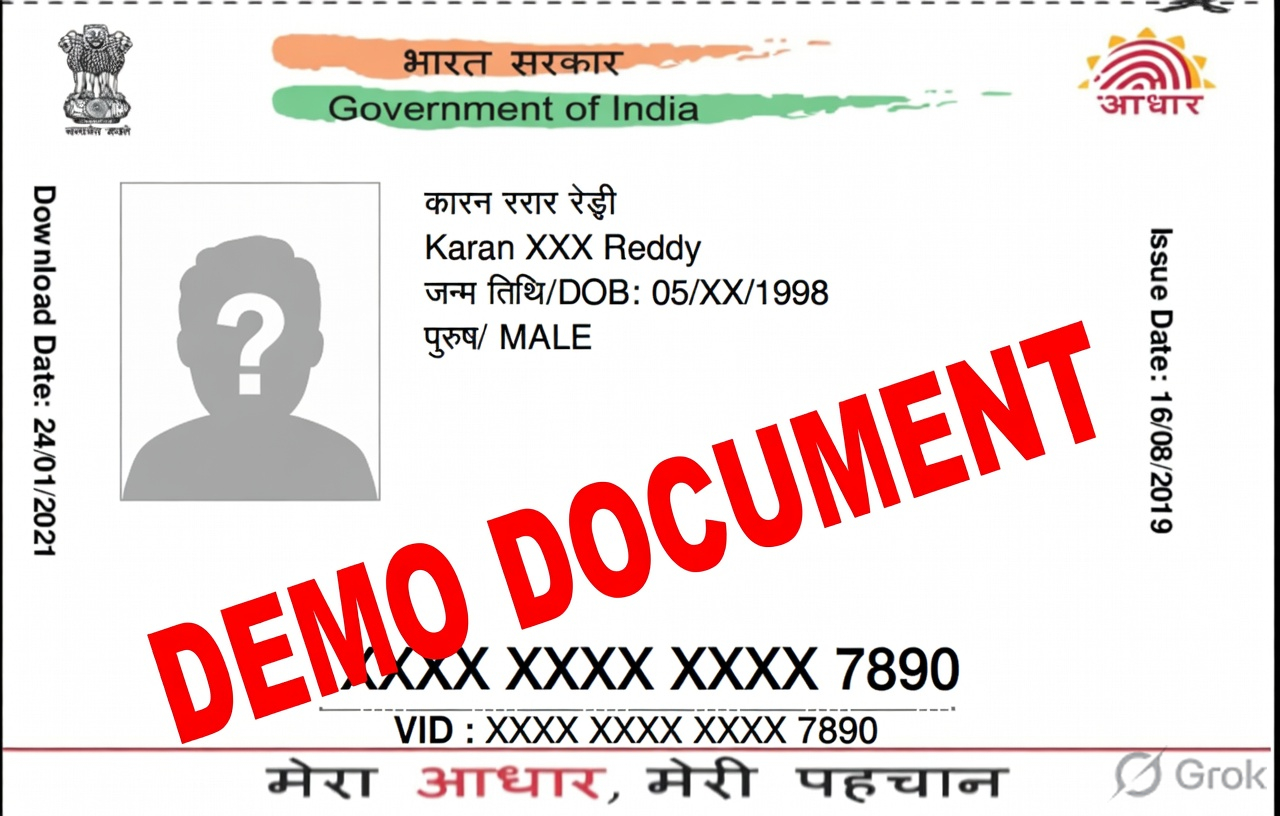

"rec": [

"Karan XXX Reddy",

"जन्म तिथि/DOB: 05/XX/1998",

"पुरुष/ MALE",

"XXX XXXX XXXX 7890",

... // full OCR text array

],

"det": [

[[426,230],[704,230],[704,265],[426,265]],

[[422,273],[828,273],[828,306],[422,306]],

... // one bounding box (four [x, y] corner points) per rec entry

],

"width": 1280,

"height": 816

}
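The request body above can be assembled and sanity-checked before it is sent. The following is a minimal Python sketch, not part of the API itself: the helper name `build_predict_payload` is hypothetical, and the check that `rec` and `det` have the same length is an assumption drawn from the example, where each text entry appears to pair with one four-point box.

```python
import json


def build_predict_payload(rec, det, width, height, rules=None):
    """Assemble the JSON body for POST /der/model/predict/{model_id}."""
    # Assumption: det[i] is the quadrilateral for rec[i], so lengths must match.
    if len(rec) != len(det):
        raise ValueError(f"rec has {len(rec)} entries but det has {len(det)}")
    for i, box in enumerate(det):
        # Each box in the example is four [x, y] corner points.
        if len(box) != 4 or any(len(pt) != 2 for pt in box):
            raise ValueError(f"det[{i}] is not a list of four [x, y] points")
    return {
        "rules": rules or {},
        "rec": list(rec),
        "det": [[list(pt) for pt in box] for box in det],
        "width": width,
        "height": height,
    }


payload = build_predict_payload(
    rec=["Karan XXX Reddy", "जन्म तिथि/DOB: 05/XX/1998"],
    det=[
        [[426, 230], [704, 230], [704, 265], [426, 265]],
        [[422, 273], [828, 273], [828, 306], [422, 306]],
    ],
    width=1280,
    height=816,
)

# ensure_ascii=False keeps the Devanagari text readable in the encoded body.
body = json.dumps(payload, ensure_ascii=False).encode("utf-8")
```

Encoding the body as UTF-8 matters here because the OCR text mixes Hindi and English.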

─────────────────────────────────────────

// Response (default: layout output)

{

"width": 1280, "height": 816,

"boxes": [

{ "label": "name", "text": "Karan XXX Reddy", "score": 0.939, "box": [[426,230],...] },

{ "label": "dob", "text": "05/XX/1998", "score": 0.955, "box": [[422,273],...] },

{ "label": "uid", "text": "XXX XXXX XXXX 7890", "score": 0.966, "box": [[372,647],...] }

]

}
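A client that receives the layout output may want to drop low-confidence fields before using them. The sketch below is one way to do that in Python. The function name `fields_from_layout` and the `min_score` threshold are illustrative assumptions, and the sample omits the `box` coordinates because the example above elides them.

```python
def fields_from_layout(response, min_score=0.0):
    """Collapse a layout response into {label: text}.

    Keeps the highest-scoring box per label and skips boxes below min_score.
    """
    best = {}
    for item in response.get("boxes", []):
        label, score = item["label"], item["score"]
        if score < min_score:
            continue
        if label not in best or score > best[label]["score"]:
            best[label] = item
    return {label: item["text"] for label, item in best.items()}


layout = {
    "width": 1280,
    "height": 816,
    "boxes": [
        {"label": "name", "text": "Karan XXX Reddy", "score": 0.939},
        {"label": "dob", "text": "05/XX/1998", "score": 0.955},
        {"label": "uid", "text": "XXX XXXX XXXX 7890", "score": 0.966},
    ],
}

print(fields_from_layout(layout, min_score=0.95))
# {'dob': '05/XX/1998', 'uid': 'XXX XXXX XXXX 7890'}
```

With a threshold of 0.95 the `name` field (score 0.939) is dropped, which shows why the per-box `score` is worth keeping when downstream checks depend on it.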

// Append ?format=simple to the request URL for flat key-value output

{ "name": "Karan XXX Reddy", "dob": "05/XX/1998", "gender": "MALE", "uid": "XXX XXXX XXXX 7890" }
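The path and the optional `format=simple` query parameter can be combined into a request object as in the Python sketch below, which uses only the standard library. The host `https://api.example.com`, the model ID `my-model`, and the helper name `predict_request` are placeholders, not values from this API. Authentication is not described here and is left out.

```python
from urllib.parse import quote, urlencode
from urllib.request import Request

BASE_URL = "https://api.example.com"  # hypothetical host, not from the source


def predict_request(model_id, body, simple=False, base_url=BASE_URL):
    """Build a POST request for /der/model/predict/{model_id}."""
    # Percent-encode the model ID so it is safe to place in the path.
    path = f"/der/model/predict/{quote(str(model_id), safe='')}"
    # Only add the query string when the flat key-value output is wanted.
    query = "?" + urlencode({"format": "simple"}) if simple else ""
    return Request(
        base_url + path + query,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = predict_request("my-model", b"{}", simple=True)
print(req.full_url)
# https://api.example.com/der/model/predict/my-model?format=simple
```

Passing the request to `urllib.request.urlopen(req)` would send it. Leaving `simple=False` omits the query string and should return the default layout output.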